AI for Beginners: What It Is, How It Works, and Where to Start in 2026


AI is everywhere, which makes it harder, not easier, to understand. The hype drowns out the explanation. You'll find breathless predictions about superintelligence sitting right next to tutorials that assume you already know what a neural network is. Neither is useful if you're starting from zero.

This guide is for people who want to actually understand what AI is, what the key ideas mean, and how to go from knowing nothing to having a real foundation. It's not going to be exhaustive — AI is a large field — but it will give you an accurate map.

What AI Actually Is

Artificial intelligence is a broad term for computer systems that perform tasks that typically require some form of human-like reasoning: recognizing images, understanding language, making recommendations, playing games.

That's the definition, but it doesn't tell you much about how it works. Here's the more useful frame: for most of its history, programming meant writing explicit rules, such as "if the image contains these pixel patterns, it's probably a cat." This approach works fine for simple, well-defined problems and breaks down completely for complex ones. You can't write rules to cover every way a cat might appear in a photo.

Modern AI, and machine learning in particular, flips this. Instead of writing rules, you show the system thousands or millions of examples, and it figures out the patterns itself. You don't program the cat-recognition rules; you show the system hundreds of thousands of labeled photos, some with cats and some without, and let it find its own representation of what "cat" looks like in the data.

That's machine learning in one paragraph. Almost everything impressive in AI right now — including the large language models behind tools like ChatGPT and Claude — is a variation on this basic idea.
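The contrast between hand-written rules and learned rules can be sketched on a toy problem. The example below is only an illustration: the spam task, the exclamation-mark feature, the data, and every function name are made up for this sketch, and real systems learn from far richer inputs.

```python
# Toy task: decide whether a message is spam from a single number,
# the count of exclamation marks. All data here is invented for illustration.

def rule_based_is_spam(exclamations: int) -> bool:
    """Hand-written rule: a programmer guessed the cutoff."""
    return exclamations >= 10


def learn_threshold(examples: list[tuple[int, bool]]) -> int:
    """Pick the cutoff that misclassifies the fewest labeled examples."""
    candidates = sorted({count for count, _ in examples})
    best_cutoff, best_errors = candidates[0], len(examples) + 1
    for cutoff in candidates:
        # An example is an error when the prediction disagrees with its label.
        errors = sum((count >= cutoff) != label for count, label in examples)
        if errors < best_errors:
            best_cutoff, best_errors = cutoff, errors
    return best_cutoff


# (exclamation marks, is_spam) pairs: hypothetical labeled examples.
labeled_examples = [(0, False), (1, False), (2, False), (3, True),
                    (4, True), (6, True), (1, False), (5, True)]

learned_cutoff = learn_threshold(labeled_examples)


def learned_is_spam(exclamations: int) -> bool:
    """Same shape as the hand-written rule, but the cutoff came from data."""
    return exclamations >= learned_cutoff
```

On this data the learned cutoff separates the examples perfectly, while the guessed cutoff of 10 would miss every spam message. The point is not the method, which is deliberately crude, but where the rule comes from.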

Key Concepts You Need to Understand

Machine Learning

Machine learning (ML) is the subset of AI that learns from data. A machine learning system is trained on examples, adjusts its internal parameters to minimize errors on those examples, and ends up with a model that can make predictions on new data it hasn't seen before.

It helps to think about what a "model" actually is. A trained ML model is essentially a large mathematical function — it takes inputs (pixels, words, numbers) and produces outputs (labels, predictions, scores). The training process finds the function parameters that produce the right outputs for the training data.
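To make "adjusts its internal parameters to minimize errors" concrete, here is a minimal sketch of gradient descent on a model with just two parameters. The data, the learning rate, and the step count are assumptions chosen for illustration; real models have vastly more parameters but follow the same loop.

```python
def predict(x, w, b):
    """The model: a function with two parameters, w and b."""
    return w * x + b


def mean_squared_error(data, w, b):
    """Average squared gap between predictions and the known answers."""
    return sum((predict(x, w, b) - y) ** 2 for x, y in data) / len(data)


def train(data, learning_rate=0.05, steps=2000):
    """Repeatedly nudge w and b in the direction that reduces the error."""
    w, b = 0.0, 0.0
    n = len(data)
    for _ in range(steps):
        # Gradients of the mean squared error with respect to w and b.
        grad_w = sum(2 * (predict(x, w, b) - y) * x for x, y in data) / n
        grad_b = sum(2 * (predict(x, w, b) - y) for x, y in data) / n
        w -= learning_rate * grad_w
        b -= learning_rate * grad_b
    return w, b


# Hypothetical training examples generated from y = 2x + 1.
training_data = [(0.0, 1.0), (1.0, 3.0), (2.0, 5.0), (3.0, 7.0)]

w, b = train(training_data)
print(f"learned w={w:.3f}, b={b:.3f}")
print(f"prediction for unseen x=10: {predict(10.0, w, b):.2f}")
```

The training loop never sees the formula behind the data. It only sees examples and an error score, and it recovers parameters close to 2 and 1 that also work for an input it was never shown.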

Training Data

Training data is the collection of examples the model learns from. This matters enormously. A model trained on photos from one country will often perform worse on photos from another. A language model trained primarily on English text will handle other languages less reliably. The patterns a model learns are only as good as the data it was trained on.

This is why AI systems can fail in predictable ways, and why "garbage in, garbage out" is a genuine principle, not a cliché.
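A small numerical sketch shows why this kind of failure is predictable. Here a straight line is fitted to data drawn from a narrow range and then evaluated on a range it never saw. The underlying relationship, the ranges, and the function names are all assumptions made up for the example.

```python
def true_relationship(x):
    """Hypothetical ground truth the model never gets to see directly."""
    return x ** 2


def fit_line(points):
    """Ordinary least squares fit of y = slope * x + intercept."""
    n = len(points)
    mean_x = sum(x for x, _ in points) / n
    mean_y = sum(y for _, y in points) / n
    covariance = sum((x - mean_x) * (y - mean_y) for x, y in points)
    variance = sum((x - mean_x) ** 2 for x, _ in points)
    slope = covariance / variance
    return slope, mean_y - slope * mean_x


def mean_abs_error(points, slope, intercept):
    """Average absolute gap between the line and the true values."""
    return sum(abs(slope * x + intercept - y) for x, y in points) / len(points)


# Training data covers only x between 0.0 and 2.0.
seen = [(i / 10, true_relationship(i / 10)) for i in range(0, 21)]
# Evaluation data comes from a different range, 8.0 to 10.0.
unseen = [(i / 10, true_relationship(i / 10)) for i in range(80, 101)]

slope, intercept = fit_line(seen)
error_on_seen = mean_abs_error(seen, slope, intercept)
error_on_unseen = mean_abs_error(unseen, slope, intercept)

print(f"error on the kind of data it trained on: {error_on_seen:.2f}")
print(f"error on data unlike its training set:   {error_on_unseen:.2f}")
```

The model looks reasonable on inputs that resemble its training data and is badly wrong elsewhere, even though nothing in the code is broken.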

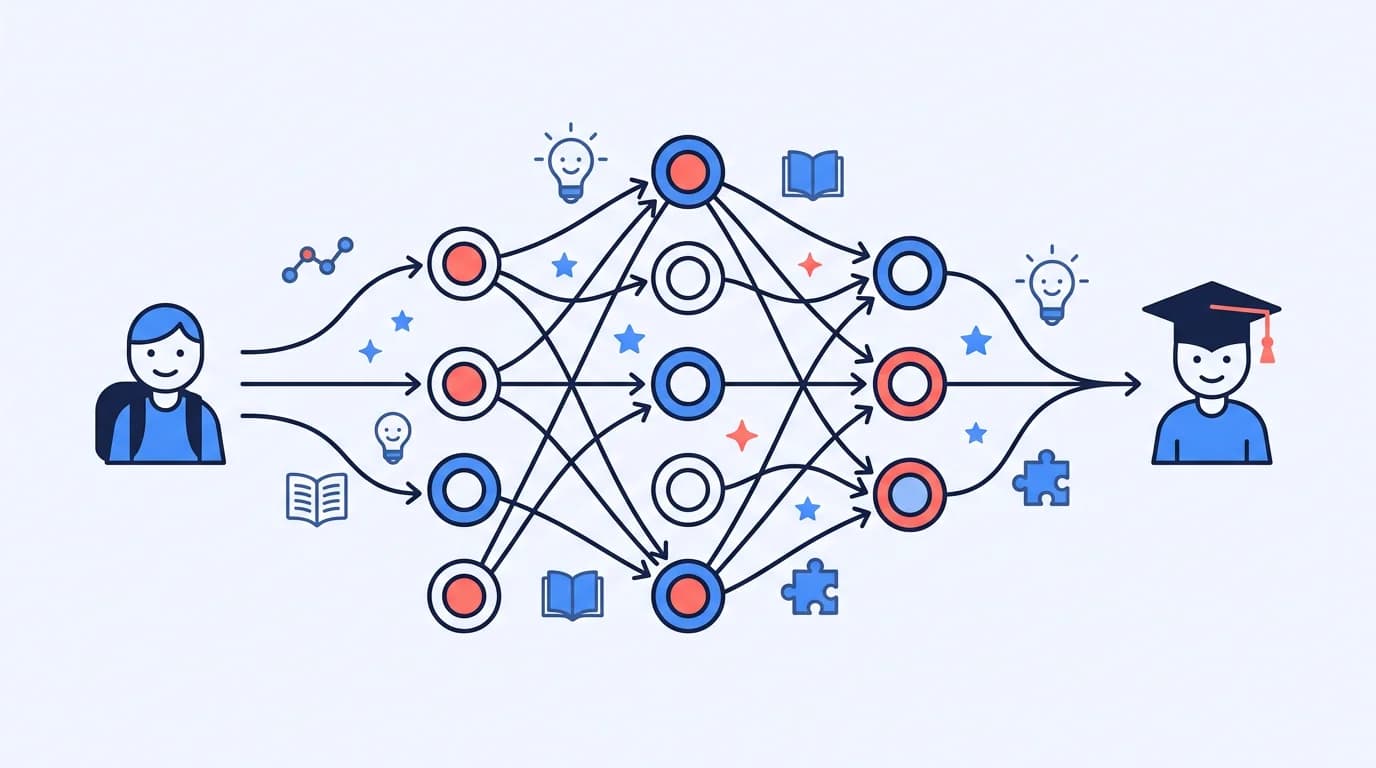

Neural Networks

Neural networks are the dominant approach in modern ML. They're loosely inspired by biological neurons — they consist of layers of simple mathematical units that each take inputs, apply a transformation, and pass the result to the next layer.

What makes neural networks powerful is that they can be stacked into many layers (hence "deep learning") and can learn surprisingly complex patterns. What makes them confusing is that the learned patterns aren't easily interpretable: a trained neural network doesn't produce a set of rules you can read; it produces millions of numerical parameters whose meaning isn't human-readable.
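The "layers of simple units" idea fits in a few lines of code. The sketch below runs inputs through a two-layer network that computes XOR (output 1 when exactly one input is 1). The weights are picked by hand purely for illustration; in a real network they would be found by training, and the names used here are not from any library.

```python
def relu(value):
    """A common transformation: pass positives through, clip negatives to 0."""
    return max(0.0, value)


def identity(value):
    """No transformation, used for the output layer in this sketch."""
    return value


def dense_layer(inputs, weights, biases, activation):
    """Each unit takes a weighted sum of its inputs, adds a bias, transforms it."""
    outputs = []
    for unit_weights, bias in zip(weights, biases):
        weighted_sum = sum(w * x for w, x in zip(unit_weights, inputs)) + bias
        outputs.append(activation(weighted_sum))
    return outputs


# Hand-picked parameters (an assumption for this example, not learned).
HIDDEN_WEIGHTS = [[1.0, 1.0], [1.0, 1.0]]  # two hidden units, two inputs each
HIDDEN_BIASES = [0.0, -1.0]
OUTPUT_WEIGHTS = [[1.0, -2.0]]             # one output unit, two hidden inputs
OUTPUT_BIASES = [0.0]


def forward(inputs):
    """Pass the inputs through the hidden layer, then the output layer."""
    hidden = dense_layer(inputs, HIDDEN_WEIGHTS, HIDDEN_BIASES, relu)
    return dense_layer(hidden, OUTPUT_WEIGHTS, OUTPUT_BIASES, identity)[0]


for pair in ([0.0, 0.0], [0.0, 1.0], [1.0, 0.0], [1.0, 1.0]):
    print(pair, "->", forward(pair))
```

Even with only six weights, nothing in the numbers announces "this is XOR". That is a small-scale version of the interpretability problem described above.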

Inference

Training is when a model learns from data. Inference is when you use the trained model to make predictions. When you type a question into an AI tool and it gives you an answer, that's inference. The weeks the system spent processing billions of text examples to develop its capabilities were training.

This distinction matters because training is computationally expensive (it requires powerful hardware running for days or weeks) while inference is relatively cheap per request. You interact with AI at inference time.
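The cost gap can be seen even in a toy model by counting how often the model function runs. This is a rough sketch with made-up data and a hypothetical `TinyModel` class; the absolute numbers mean nothing, only the ratio between the two phases.

```python
class TinyModel:
    """A one-parameter model that counts how many times it is evaluated."""

    def __init__(self):
        self.weight = 0.0
        self.evaluations = 0

    def predict(self, x):
        self.evaluations += 1
        return self.weight * x

    def train(self, data, learning_rate=0.01, epochs=200):
        # Training: loop over every example many times, adjusting the weight.
        for _ in range(epochs):
            for x, y in data:
                error = self.predict(x) - y
                self.weight -= learning_rate * 2 * error * x


# Hypothetical examples generated from y = 3x.
data = [(1.0, 3.0), (2.0, 6.0), (3.0, 9.0), (4.0, 12.0)]

model = TinyModel()
model.train(data)
training_evaluations = model.evaluations

# Inference: the weight is now fixed, and answering takes one evaluation.
model.evaluations = 0
answer = model.predict(5.0)
inference_evaluations = model.evaluations

print(f"evaluations during training:  {training_evaluations}")
print(f"evaluations for one question: {inference_evaluations}")
```

Training here costs 200 passes over 4 examples, while answering a question costs a single evaluation of the finished model.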

Large Language Models (LLMs)

LLMs are the AI systems behind most of the text-based AI tools you've encountered. They're trained on enormous quantities of text data and learn to predict the next word (or more precisely, the next token) given what came before. This sounds simple, but a model that can predict text well enough across enough examples ends up developing surprisingly general capabilities — summarizing, translating, answering questions, writing code.

The "large" in LLM refers to scale: modern LLMs have billions or hundreds of billions of parameters, trained on trillions of tokens.
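Next-token prediction can be sketched with a model that simply counts which word tends to follow which. This is a deliberately tiny stand-in, not how an LLM is built: real models use neural networks over subword tokens and far longer context, and the corpus and function names below are invented for the example.

```python
from collections import Counter, defaultdict


def train_bigram_model(text):
    """Count, for every token, which tokens followed it in the training text."""
    tokens = text.split()
    next_counts = defaultdict(Counter)
    for current, following in zip(tokens, tokens[1:]):
        next_counts[current][following] += 1
    return next_counts


def predict_next(model, token):
    """Return the most frequently seen follower, or None for unseen tokens."""
    if token not in model:
        return None
    return model[token].most_common(1)[0][0]


def generate(model, start, max_tokens=5):
    """Generate text by repeatedly predicting the next token."""
    output = [start]
    for _ in range(max_tokens):
        following = predict_next(model, output[-1])
        if following is None:
            break
        output.append(following)
    return " ".join(output)


# A made-up training corpus; whitespace-separated words act as tokens.
corpus = "the cat sat on the mat . the cat ate the fish . the dog sat on the rug ."
bigram_model = train_bigram_model(corpus)

print(predict_next(bigram_model, "the"))
print(generate(bigram_model, "dog", max_tokens=3))
```

The model produces plausible-looking continuations purely from patterns in its training text, and it has nothing to say about a word it never saw. Scaling the same objective up by many orders of magnitude is what produces the general capabilities described above.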

Common Misconceptions

"AI understands things the way humans do." It doesn't. LLMs produce fluent text by predicting plausible continuations based on training data. That's impressive, and it produces useful outputs, but it's not the same as understanding. This is why LLMs can generate confident-sounding text that's factually wrong.

"AI will solve everything or destroy everything." Both extremes project certainty onto a technology whose trajectory is genuinely uncertain. Current AI systems are capable tools with real limitations; they're not gods and they're not demons.

"You need a math PhD to work with AI." You don't. The field is broad. Understanding how to use AI tools requires no math. Building applications on top of AI APIs requires programming. Doing ML research requires math. You can choose your depth.

"AI is just fancy automation." It's more interesting than that. AI systems exhibit emergent capabilities: abilities that weren't explicitly programmed and weren't always predicted from smaller models. They also fail in ways that pure automation doesn't, which matters for knowing when to trust them.

A Learning Path for Beginners

Here's a realistic sequence for going from beginner to a solid foundation. I'll flag where a step has a real prerequisite and where you can start immediately.

Step 1: Conceptual Foundation (Start Here)

Before touching code or math, build your mental model. Read broadly about what AI is, what it can and can't do, how it's being used. This is the stage where curiosity drives the work. A few resources that don't require prerequisites:

- "A Human's Guide to Machine Intelligence" by Kartik Hosanagar — readable, accurate, and practical

- 3Blue1Brown's neural network series on YouTube — visual, intuitive, and free

- The documentation and explanations published by major AI labs (Anthropic, OpenAI, Google DeepMind all publish accessible material)

Step 2: Python Basics

Python is the dominant language of ML and AI. You don't need to become an expert programmer, but you need enough Python to run notebooks, manipulate data, and use libraries. You can get there in a few weeks of consistent practice.

Good starting points: Codecademy's Python course, Python.org's official tutorial, or the free book Automate the Boring Stuff with Python by Al Sweigart.

Step 3: ML Fundamentals

This is where the real learning begins. There are two widely respected starting points:

- Andrew Ng's Machine Learning Specialization on Coursera — structured, mathematically honest without being overwhelming, and probably the most widely recommended ML course for beginners. It's updated and covers modern approaches including neural networks.

- fast.ai's Practical Deep Learning for Coders — takes the opposite pedagogical approach: start with working code and build up intuition before formalizing. Many people find this more motivating.

Either is a good choice. The main thing is to pick one and complete it rather than jumping between resources.

Step 4: Mathematics (As Needed)

Linear algebra and calculus come up constantly in ML. You don't need to master them before starting — you can develop the math alongside the ML concepts. The 3Blue1Brown series on linear algebra and calculus is the best visual introduction available. Khan Academy covers the same ground in a more structured format.

Step 5: Build Something

No amount of coursework substitutes for building a project. A simple starting project: take a dataset from Kaggle, train a classifier on it, evaluate your results, and write up what you found. The act of running into real problems and solving them teaches things that courses don't.
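The shape of such a project (load data, hold some out, train, evaluate) can be sketched without any external dependencies. Everything below is an assumption made for illustration: the dataset is synthetic rather than from Kaggle, and the classifier is a bare-bones nearest-neighbor method. In a real project you would more likely load a CSV and use a library such as scikit-learn.

```python
import math
import random


def make_dataset(rng, points_per_class=50):
    """Synthetic stand-in for a real dataset: two clusters of 2-D points."""
    data = []
    for label, center in (("a", (0.0, 0.0)), ("b", (5.0, 5.0))):
        for _ in range(points_per_class):
            features = (rng.gauss(center[0], 1.0), rng.gauss(center[1], 1.0))
            data.append((features, label))
    return data


def train_test_split(data, rng, test_fraction=0.2):
    """Shuffle, then hold out a fraction the model never sees in training."""
    shuffled = data[:]
    rng.shuffle(shuffled)
    test_size = int(len(shuffled) * test_fraction)
    return shuffled[test_size:], shuffled[:test_size]


def predict_nearest_neighbor(train_rows, features):
    """Classify a point with the label of the closest training example."""
    nearest = min(train_rows, key=lambda row: math.dist(row[0], features))
    return nearest[1]


def accuracy(train_rows, test_rows):
    """Fraction of held-out examples classified correctly."""
    correct = sum(
        predict_nearest_neighbor(train_rows, features) == label
        for features, label in test_rows
    )
    return correct / len(test_rows)


rng = random.Random(0)  # fixed seed so the run is repeatable
dataset = make_dataset(rng)
train_rows, test_rows = train_test_split(dataset, rng)
test_accuracy = accuracy(train_rows, test_rows)

print(f"trained on {len(train_rows)} examples, tested on {len(test_rows)}")
print(f"accuracy on held-out data: {test_accuracy:.2f}")
```

The held-out test set is the important design choice: measuring accuracy on examples the model trained on would say little about how it handles new data. A real dataset will be messier than these two clean clusters, which is exactly where the learning happens.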

The Retention Problem with AI Courses

One challenge with learning AI is that the material is genuinely dense. Concepts build on each other, the vocabulary is technical, and courses move fast. Many people make it through a course but find they haven't retained much by the time they try to apply it.

Active recall helps significantly here. If you're working through an AI course, don't just watch and take notes — test yourself on the concepts. CuFlow can help with this: upload your course notes or paste in material you're studying, and it generates flashcards and quizzes automatically. Working through quizzes on concepts like gradient descent, overfitting, or the difference between supervised and unsupervised learning is more effective than reviewing slides again.

Free Resources Worth Knowing

fast.ai — Free deep learning courses, practical-first approach, actively maintained. One of the most respected free resources in the field.

Coursera — Andrew Ng's courses — The Machine Learning Specialization and Deep Learning Specialization are available free to audit. Possibly the most structured path for beginners.

DeepLearning.AI short courses — Shorter, application-focused courses on specific topics like prompt engineering, LLMs, and MLOps. Good for filling specific gaps.

Kaggle — Free courses, datasets, and competitions. Excellent for getting hands-on experience and seeing how practitioners approach problems.

Papers With Code — If you get deeper into ML, this tracks research papers alongside code implementations. Not for beginners, but worth knowing about.

Frequently Asked Questions

Do I need to know math to learn AI?

It depends on your goal. Using AI tools: no math required. Building applications with AI APIs: minimal math, more programming. Training your own models and understanding how they work: yes, linear algebra and calculus become important. Most beginners can start before their math is solid and build it up alongside their ML work.

How long does it take to learn AI from scratch?

To get a working foundation in ML — enough to run projects, understand what you're doing, and continue learning independently — expect 6–12 months of consistent work. Becoming a research-level practitioner takes longer. The good news is that the field is well-documented and most of the best resources are free.

What's the difference between AI, machine learning, and deep learning?

These are nested terms. AI is the broadest category — any system that performs tasks requiring human-like reasoning. Machine learning is a subset of AI focused on learning from data rather than explicit programming. Deep learning is a subset of machine learning using neural networks with many layers. Most of what's impressive in AI today is deep learning.

Is Python the only language I need?

For most ML and AI work, yes. Python is dominant in the field, and almost all major libraries (TensorFlow, PyTorch, scikit-learn, Hugging Face) are Python-first. Some applications also use JavaScript for browser-based AI or C++ for performance-critical inference, but Python is the right starting point.

Can I learn AI without a computer science degree?

Yes. Many working AI practitioners don't have CS degrees. What matters is whether you can understand and apply the concepts. The field has enough online resources and open-source tools that self-directed learning is genuinely viable.