How AI Converts Your Study Notes Into a Podcast Episode


Written notes are the dominant format for capturing course content. They're efficient to create, easy to search, and widely supported by study tools. But they require you to sit at a desk and actively read — which limits when and how you can review them.

In 2026, AI tools can convert written study notes into spoken audio that sounds like a podcast episode: structured, natural-sounding, and suitable for review while commuting, exercising, or winding down. The technology behind this is more sophisticated than basic text-to-speech, and the results are more useful than most students expect.

The Technology Stack Behind Notes-to-Podcast Conversion

Converting notes to a listenable podcast involves three distinct AI processes working in sequence:

1. Content Restructuring

Study notes are typically written in a format optimised for reading and scanning: bullet points, abbreviations, dense references, fragmented sentences. This format doesn't translate well to audio — a listener can't scan back or re-read a confusing line.

The first stage is content restructuring: AI rewrites the notes into a format designed for listening. Short sentences. Explicit signposting ("Moving on to the second concept..."). Definitions stated clearly rather than implied. Context that a reader would infer from surrounding structure but a listener needs to hear directly.

This restructuring is handled by large language models. The quality of this stage determines most of the output's usefulness.
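Production tools do this with an LLM, but a toy rule-based version makes the transformation concrete. The sketch below assumes notes are written as `-` bullets and uses a small, hypothetical abbreviation table. It expands abbreviations, turns each bullet into a complete sentence, and adds an ordinal signpost. It cannot repair a fragment with a missing subject or supply implied context, and that is exactly the part an LLM handles well.

```python
import re

# Hypothetical abbreviation table; an LLM would infer these from context.
ABBREVIATIONS = {
    "e.g.": "for example",
    "w/": "with",
    "b/c": "because",
    "approx.": "approximately",
}

# Spoken signposts for the first few points; later points get "next".
ORDINALS = ["first", "second", "third", "fourth", "fifth"]


def expand_abbreviations(text: str) -> str:
    """Replace each known abbreviation with its spoken form."""
    for short, spoken in ABBREVIATIONS.items():
        # re.escape makes '.' and '/' match literally; the lookarounds stop
        # "w/" from matching inside a word such as "new/old".
        text = re.sub(rf"(?<!\w){re.escape(short)}(?!\w)", spoken, text)
    return text


def restructure_for_audio(notes: str) -> str:
    """Turn '-' bullet notes into complete, signposted spoken sentences."""
    bullets = [
        re.sub(r"^\s*-\s*", "", line).strip()
        for line in notes.splitlines()
        if line.strip().startswith("-")
    ]
    sentences = []
    for i, bullet in enumerate(b for b in bullets if b):
        text = expand_abbreviations(bullet)
        text = text[0].upper() + text[1:]
        if not text.endswith((".", "?", "!")):
            text += "."
        ordinal = ORDINALS[i] if i < len(ORDINALS) else "next"
        sentences.append(f"The {ordinal} point: {text}")
    return " ".join(sentences)
```

For example, the bullets `water moves by osmosis w/ no energy input` and `the driving force is a concentration gradient, e.g. salt` come out as "The first point: Water moves by osmosis with no energy input. The second point: The driving force is a concentration gradient, for example salt."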

2. Natural Language Generation

After restructuring, the content is often enriched: transitions between topics are added, examples are generated to illustrate abstract concepts, and the overall narrative arc is shaped to feel like an explanation rather than a list.

This is where the "podcast feel" comes from — the sense that someone is explaining the material to you rather than reading notes aloud. The best systems produce output that sounds like a knowledgeable tutor recording a quick walkthrough of the material.

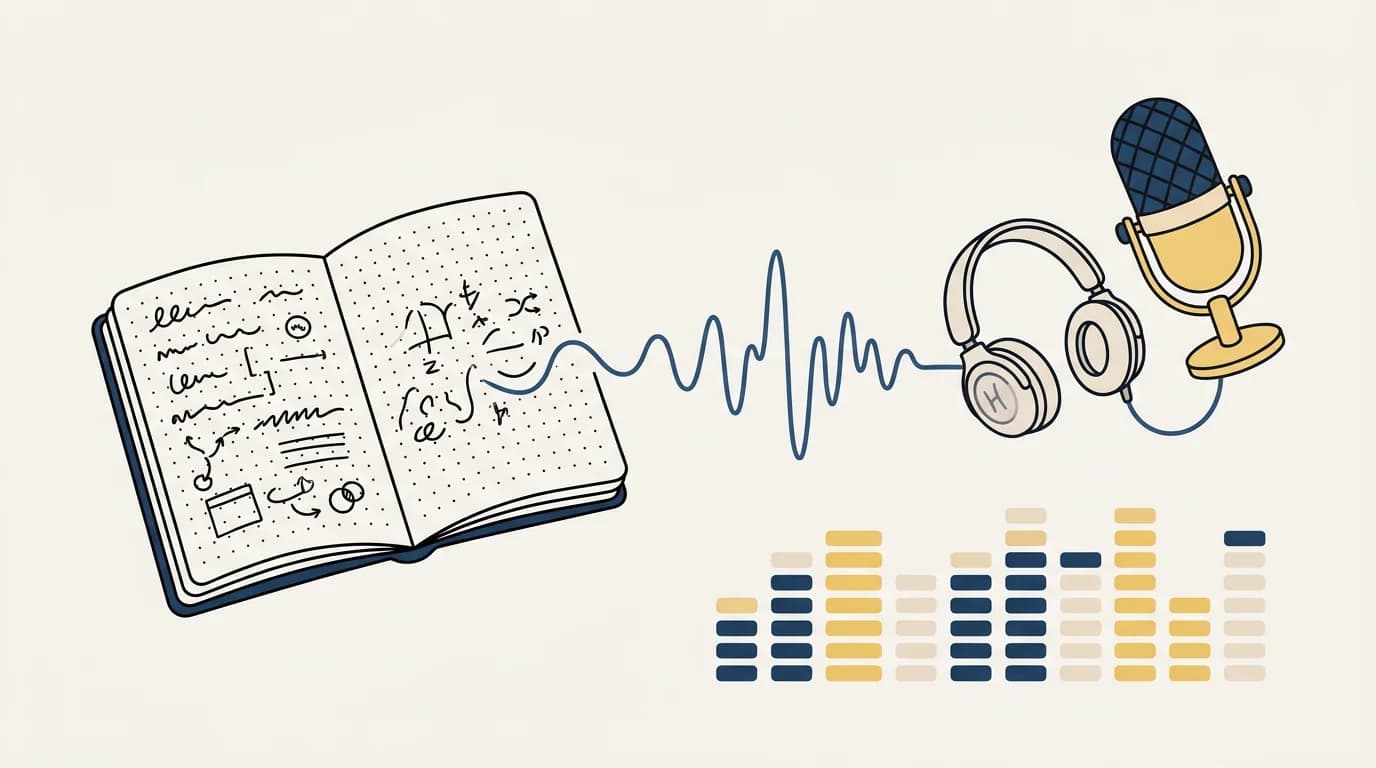
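Transitions are where a script starts to sound like an episode rather than a list. As a rough illustration, here is a template-based version with hypothetical wording: it announces the topics up front, signposts each move between sections, and closes with a recap. An LLM writes these in context rather than from fixed templates.

```python
from typing import List, Tuple


def add_transitions(sections: List[Tuple[str, str]]) -> str:
    """Join (topic, explanation) pairs into one script with spoken transitions.

    The intro, transition, and recap templates are hypothetical stand-ins for
    what an LLM would write in context.
    """
    if not sections:
        return ""
    topics = [topic for topic, _ in sections]
    noun = "topic" if len(topics) == 1 else "topics"
    parts = [f"In this episode we'll cover {len(topics)} {noun}: {', '.join(topics)}."]
    for i, (topic, body) in enumerate(sections):
        if i == 0:
            parts.append(f"Let's start with {topic}. {body}")
        elif i == len(sections) - 1:
            parts.append(f"Finally, let's look at {topic}. {body}")
        else:
            parts.append(f"Moving on to {topic}. {body}")
    # A closing recap restates the structure for a listener who can't scroll back.
    parts.append(f"To recap, we covered {', '.join(topics)}.")
    return "\n\n".join(parts)
```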

3. Text-to-Speech Synthesis

The final stage converts text to audio. Modern TTS systems, including those from ElevenLabs, OpenAI, and Google, produce speech that is increasingly difficult to distinguish from a human recording. Professional-grade TTS in 2026 routinely offers voice variety (different speakers for different sections), natural pacing, and appropriate emphasis on key terms. Technical vocabulary is handled too, though less reliably (see the limitations below).

The output is typically an MP3 or WAV file you can listen to on any device.
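Because the three stages run in sequence, the overall shape is a simple function pipeline. The sketch below uses hypothetical class and stage names. It keeps each stage swappable (any LLM for stages 1 and 2, any TTS provider for stage 3) and returns the intermediate script alongside the audio so the script can be reviewed.

```python
from dataclasses import dataclass
from typing import Callable, Tuple

# Hypothetical stage signatures: text in / text out for the LLM stages,
# text in / encoded audio bytes out for the TTS stage.
TextStage = Callable[[str], str]
SpeechStage = Callable[[str], bytes]


@dataclass
class NotesToPodcast:
    restructure: TextStage   # stage 1: rewrite notes for listening
    enrich: TextStage        # stage 2: transitions, examples, narrative arc
    synthesize: SpeechStage  # stage 3: TTS backend returning audio bytes

    def run(self, notes: str) -> Tuple[str, bytes]:
        """Run the stages in order; return the script too so it can be checked."""
        script = self.enrich(self.restructure(notes))
        return script, self.synthesize(script)


# Usage with stand-in stages (no real LLM or TTS calls):
pipeline = NotesToPodcast(
    restructure=str.strip,
    enrich=lambda text: f"Welcome. {text}",
    synthesize=lambda text: text.encode("utf-8"),
)
script, audio = pipeline.run("  osmosis notes  ")
# script == "Welcome. osmosis notes"; audio == b"Welcome. osmosis notes"
```

In a real setup, `synthesize` would wrap a provider's TTS call and the returned bytes would be written to an MP3 or WAV file.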

What Makes Audio Learning Effective (When It Works)

Audio learning is not universally effective. Research on modality-specific learning is mixed, and the idea that students have fixed "auditory learning styles" is not well supported. What is supported:

Spacing and review benefit: Reviewing material in audio form, particularly in a new context (commuting, walking), adds a spaced review session without requiring dedicated desk time. The spacing effect — distributed review producing better retention — applies regardless of format.

Complementary encoding: Encountering the same material in different formats (reading your notes, then hearing them summarised) involves different cognitive processing. Some research suggests that this kind of varied, multi-format review improves retention compared to single-format review.

Accessibility: For students with dyslexia, visual fatigue, or attention challenges with text, audio formats make content meaningfully more accessible.

Passive review opportunity: Not all review needs to be active recall. Hearing a summary of material before sleep or during exercise is genuinely better than no review, even if it doesn't replace testing yourself.

What audio learning doesn't do well: replace active retrieval practice. Listening to your notes doesn't produce the same retention effects as being tested on them. It's a useful complement, not a substitute.

How to Generate an AI Podcast from Your Notes

Several approaches exist in 2026:

Using a Dedicated Notes-to-Podcast Tool

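Services like Notta, Speechify, and purpose-built AI tools accept uploaded notes and output audio: either a straight read-aloud or a restructured summary, depending on the tool. Typically you upload a PDF or text file, choose a length and style where the tool offers those options, and download the audio.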

Quality varies significantly. The best tools restructure content for audio consumption rather than reading notes aloud with a synthetic voice.

Using General AI + TTS in Sequence

A flexible workflow:

- Paste your notes into an AI assistant (ChatGPT, Claude) and prompt: "Rewrite these notes as a 10-minute conversational script designed for audio, as if a tutor is explaining the key concepts to a student. Include transitions and expand on definitions."

- Copy the generated script into a TTS service (ElevenLabs, Google TTS, OpenAI TTS) to generate the audio file.

This two-step approach gives you more control over the output style and length, at the cost of more manual steps.
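Two details in this workflow are easy to get wrong: matching the script length to the target duration, and staying under the TTS service's per-request input limit. The helpers below are a sketch under two stated assumptions, both exposed as parameters: a narration rate of about 150 words per minute, and a 4,096-character request limit (the figure OpenAI documents for its speech endpoint at the time of writing; other providers differ, so check yours). The function names are hypothetical.

```python
import re
from typing import List

WORDS_PER_MINUTE = 150  # assumed conversational narration rate
MAX_CHARS = 4096        # assumed per-request TTS input limit; check your provider


def word_budget(minutes: float, wpm: int = WORDS_PER_MINUTE) -> int:
    """Approximate script length, in words, for a target episode length."""
    return round(minutes * wpm)


def build_prompt(notes: str, minutes: int = 10) -> str:
    """The article's prompt, with an explicit word count added."""
    return (
        f"Rewrite these notes as a {minutes}-minute conversational script "
        f"(about {word_budget(minutes)} words) designed for audio, as if a tutor "
        "is explaining the key concepts to a student. Include transitions and "
        f"expand on definitions.\n\nNotes:\n{notes}"
    )


def chunk_script(script: str, max_chars: int = MAX_CHARS) -> List[str]:
    """Split a script at sentence boundaries so each chunk fits one TTS request."""
    sentences = re.split(r"(?<=[.!?])\s+", script.strip())
    chunks, current = [], ""
    for sentence in sentences:
        candidate = f"{current} {sentence}".strip()
        if len(candidate) <= max_chars:
            current = candidate
            continue
        if current:
            chunks.append(current)
        # A single sentence longer than the limit is hard-split as a last resort.
        while len(sentence) > max_chars:
            chunks.append(sentence[:max_chars])
            sentence = sentence[max_chars:]
        current = sentence
    if current:
        chunks.append(current)
    return chunks
```

Send each chunk as its own TTS request and join the resulting clips in order. Splitting at sentence boundaries keeps the voice from cutting off mid-sentence; the hard split only applies to a single sentence longer than the limit.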

Using CuFlow

CuFlow processes your uploaded course materials and generates both written summaries and audio-optimised review content. The audio output is designed to complement your active study sessions — not replace them, but extend your review into time you couldn't otherwise use for studying.

The integration matters: because CuFlow tracks what you know and don't know, it can prioritise the content you most need to review in the audio summary, rather than covering everything equally.
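CuFlow's actual prioritisation method isn't described here, so the following is only an illustration of the general idea, not CuFlow's algorithm. Given a hypothetical mastery score between 0 and 1 for each topic, it splits the episode's time budget in proportion to how much each topic still needs review.

```python
from typing import Dict


def allocate_minutes(mastery: Dict[str, float], total_minutes: float) -> Dict[str, float]:
    """Split an audio time budget across topics, weighted by (1 - mastery).

    mastery maps topic -> score in [0, 1], where 1 means fully mastered.
    The weighting rule is a hypothetical illustration.
    """
    # Clamp scores into [0, 1] so bad inputs can't produce negative time.
    need = {topic: 1.0 - min(max(score, 0.0), 1.0) for topic, score in mastery.items()}
    total_need = sum(need.values())
    if total_need == 0:
        # Everything mastered (or no topics): fall back to equal coverage.
        share = total_minutes / len(mastery) if mastery else 0.0
        return {topic: share for topic in mastery}
    return {topic: total_minutes * n / total_need for topic, n in need.items()}
```

With scores of 0.9, 0.5 and 0.1 for osmosis, diffusion and active transport, a 10-minute episode gives roughly 0.7, 3.3 and 6 minutes to those topics respectively.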

When Audio Review Genuinely Helps

Based on how the technology works and the cognitive science behind review:

High value:

- Commuting or travel time you can't use for active study

- First review of material before you begin active recall sessions (orientation before drilling)

- Final-day review before an exam when cognitive load from active study is high

- Subjects with a lot of narrative content — history, literature, economics — that benefits from conversational explanation

Lower value:

- Replacing active recall and spaced repetition sessions

- Dense quantitative or procedural content (calculus, chemistry mechanisms) where audio doesn't convey the logic as well as working through problems

- Subjects where terminology is highly technical and TTS pronunciation is unreliable

The Limitations to Know

Accuracy depends on your input: AI restructures and interprets your notes. If your notes contain errors or ambiguities, the audio output may propagate or amplify them. Review AI-generated content against your source materials, especially for technical subjects.

Passive listening is not retrieval: Hearing a concept explained does not produce the same memory strengthening as trying to retrieve it yourself. Audio review is most useful as a complement to active recall, not a replacement.

TTS quality on technical vocabulary: Pronunciation of domain-specific terms, equations, and non-English words is improving but still unreliable. Medical and scientific content in particular may contain mispronunciations, so spot-check the audio before relying on it.
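Where a TTS service has no pronunciation dictionary, a common workaround is to respell problem terms phonetically in the script before synthesis. Services that accept SSML (Google Cloud Text-to-Speech, for example) offer `<sub>` and `<phoneme>` tags for the same job. The sketch below uses a small, hypothetical lexicon; the respellings are illustrative and should be tuned by ear for your chosen voice. Because it matches on word boundaries, it assumes each term starts and ends with a letter or digit.

```python
import re
from typing import Dict

# Hypothetical respellings; adjust by listening to your chosen voice.
LEXICON = {
    "NADPH": "N A D P H",
    "ATP": "A T P",
    "dyspnea": "disp-NEE-uh",
}


def apply_lexicon(script: str, lexicon: Dict[str, str] = LEXICON) -> str:
    """Replace whole-word occurrences of each term with its spoken respelling."""
    if not lexicon:
        return script
    # One combined pattern, longest terms first, so a replacement is never
    # re-scanned by a later term and longer terms win over their prefixes.
    terms = sorted(lexicon, key=len, reverse=True)
    pattern = re.compile(r"\b(" + "|".join(map(re.escape, terms)) + r")\b")
    return pattern.sub(lambda m: lexicon[m.group(1)], script)
```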

FAQ

What is the best app to turn notes into a podcast?

For students, CuFlow generates audio review content from uploaded course materials with study-context awareness. For maximum voice quality, a two-step workflow — AI restructuring plus ElevenLabs TTS — produces professional results. Speechify is a popular option for direct text-to-audio without restructuring.

Is listening to your notes as good as reading them?

For retention, neither is as effective as testing yourself. Audio and reading produce similar retention outcomes for most content. Audio is more useful when it enables review in contexts where reading isn't possible (commuting, exercising). Combine audio review with active recall sessions for best results.

How long does it take to convert notes to audio?

Most AI tools generate audio from standard lecture notes in under two minutes, and longer documents take proportionally longer. The manual two-step workflow takes more of your time but gives you more control over the script, which tends to pay off for complex content.

Can AI create a two-host podcast from my notes?

Yes. Some tools, including Google's NotebookLM with its Audio Overview feature, generate audio in which two AI voices discuss the content conversationally. This can feel more engaging than a single narrator for long review sessions, though the quality of the generated dialogue varies.
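Under the hood, a two-host episode is still one script, just with speaker labels. The sketch below assumes a hypothetical `SPEAKER: text` format and placeholder voice names. It splits the script into (voice, text) turns, each of which could be sent to a TTS service with the matching voice.

```python
import re
from typing import Dict, List, Tuple

# Hypothetical mapping from script speaker labels to TTS voice names.
VOICES = {"ALEX": "voice_a", "SAM": "voice_b"}

# A turn starts with an upper-case label, a colon, then the spoken text.
TURN = re.compile(r"^([A-Z]+):\s*(.+)$")


def parse_dialogue(script: str, voices: Dict[str, str] = VOICES) -> List[Tuple[str, str]]:
    """Split a 'SPEAKER: text' script into (voice, text) turns for synthesis.

    Lines without a known speaker label continue the previous turn.
    """
    turns: List[Tuple[str, str]] = []
    for raw in script.splitlines():
        line = raw.strip()
        if not line:
            continue
        match = TURN.match(line)
        if match and match.group(1) in voices:
            turns.append((voices[match.group(1)], match.group(2)))
        elif turns:
            voice, text = turns[-1]
            turns[-1] = (voice, f"{text} {line}")
        else:
            raise ValueError(f"Script must start with a speaker label: {line!r}")
    return turns
```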

Does listening to study notes improve memory?

Compared to no review, yes — any engagement with material is better than none. Compared to active retrieval practice, no. Audio review is most valuable as a spaced review layer that doesn't replace active recall but extends review into time otherwise unavailable for studying.

How accurate is AI at converting technical notes to audio?

Accuracy in content restructuring is generally high for well-written notes. Pronunciation accuracy varies: TTS systems in 2026 handle most standard academic vocabulary well but struggle with highly technical terms, non-English words, and spoken descriptions of equations. Reviewing the generated script before you listen catches most content errors; pronunciation problems only show up in the audio itself.